Building AI Systems That Don’t Break: A Realistic 2026 Playbook for Production-Grade RAG

Learn 7 proven tactics for building effective RAG pipelines, improving document retrieval, structuring context, and keeping AI answers aligned with..

There was a time when someone could build a “RAG system” in an afternoon, connect an LLM to a vector database, add a few PDF files, and proudly call it “production ready”.

That era is gone.

In 2026, the distance between a weekend demo and a production AI product is no longer a small gap – it’s a canyon.

And teams that still treat RAG as a cute experiment are discovering something the hard way:

Prototypes survive demos.

Systems survive reality.

Reality is messy.

Customers ask vague questions. They ask the wrong questions. They ask multi-step questions. They reference old data. They expect accuracy. They expect reliability. And they expect systems to never make things up.

Today’s winning companies understand something simple:

Production RAG is an engineering problem. That means design, discipline, testing, guardrails, observability, change control – all things we already know from decades of software engineering.

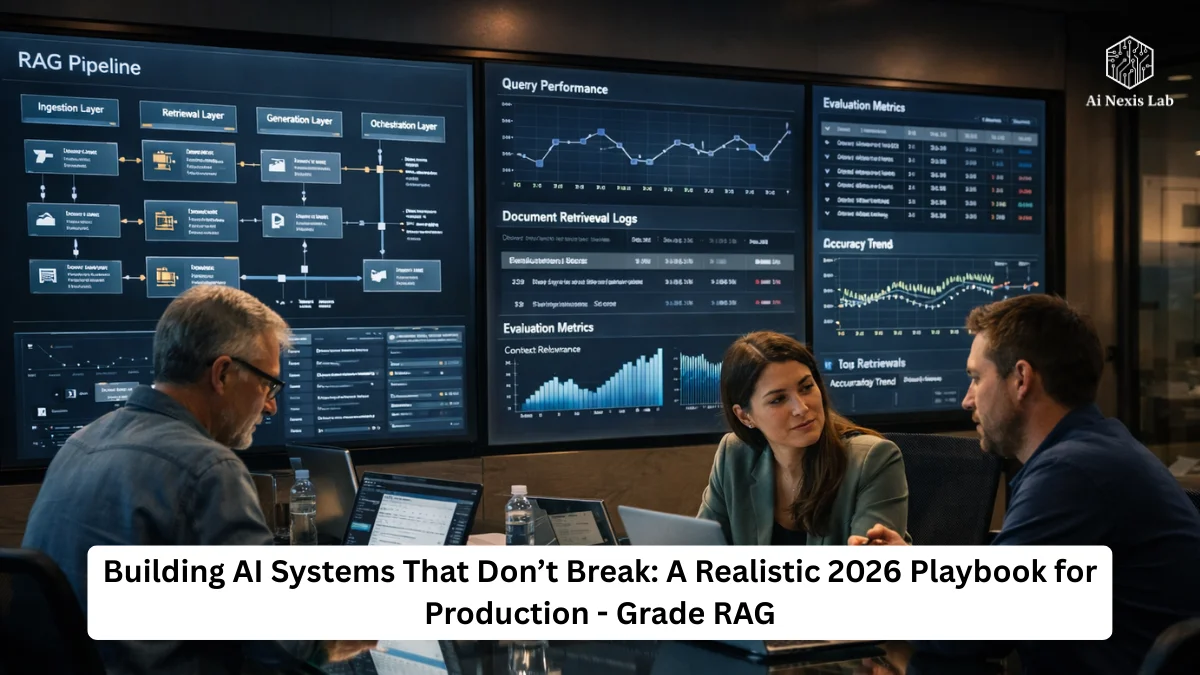

And to build truly sustainable systems, modern AI engineering revolves around two pillars:

- The 4-tier RAG pipeline – the “how”

- The Evals framework – the “proof”

If you don’t have both, you don’t have a system. You have a demo waiting to break.

Let’s break it down.

Part 1: How Real RAG Systems Are Built Today

Most people still talk about RAG as if it were a single thing:

“Take a user question → fetch some chunks → ask an LLM”.

It sounds simple.

That apparent simplicity is why many implementations get confused, miss important context, or drown in irrelevant documents.

In fact, a modern RAG system is a multi-stage pipeline designed around one goal:

Maximize the usable signal. Minimize useless noise.

Think of it as four layers working together:

- Ingestion – how knowledge enters the system

- Retrieval – how knowledge is discovered

- Generation – how knowledge becomes an answer

- Orchestration – how the system coordinates multi-step logic

Once you see the pipeline this way, many of the “mysterious errors” suddenly make sense.

1. Ingestion Layer

Where most systems fail before they even start

You can’t build accurate answers on sloppy input.

Garbage in. Garbage out. No magic model will save you.

The ingestion layer is the intelligent librarian – it determines:

- How documents are split

- What metadata they carry

- How they are indexed

- And finally… how searchable they are later

Semantic (not naive) chunking

Old-fashioned chunking:

“Split text every 500-1,000 characters”.

The result? Sentences split in half, ideas fall apart, sections lose meaning.

Modern systems use semantic or agentic chunking instead.

A small helper model analyzes the document and places breakpoints where topics naturally change:

- Price → separate chunk

- Architecture → separate chunk

- Security → separate chunk

Now each chunk is a complete idea, not an accidental slice.

This single improvement often raises retrieval quality considerably.
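As a rough illustration of topic-aware chunking, the sketch below starts a new chunk whenever two adjacent sentences share too few words. The word-overlap (Jaccard) measure is only a stand-in for the helper model described above, and the function names and the `threshold` value are assumptions made for this example.

```python
import re


def _words(sentence: str) -> set[str]:
    """Lowercased word set, a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", sentence.lower()))


def semantic_chunks(sentences: list[str], threshold: float = 0.1) -> list[str]:
    """Start a new chunk whenever adjacent sentences share too few words."""
    chunks: list[list[str]] = []
    for sentence in sentences:
        if chunks:
            prev, cur = _words(chunks[-1][-1]), _words(sentence)
            union = prev | cur
            similarity = len(prev & cur) / len(union) if union else 0.0
            if similarity >= threshold:
                chunks[-1].append(sentence)  # same topic: extend current chunk
                continue
        chunks.append([sentence])  # topic shift (or first sentence): new chunk
    return [" ".join(chunk) for chunk in chunks]


sentences = [
    "Pricing starts at ten dollars per seat.",
    "Pricing per seat drops for large teams.",
    "All traffic is encrypted with TLS.",
    "Backups are encrypted with TLS too.",
]
for chunk in semantic_chunks(sentences):
    print(chunk)
```

With these sample sentences the pricing lines end up in one chunk and the security lines in another. In a real pipeline the similarity function would likely be replaced by embeddings or a small model.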

Metadata is not extra. It is everything.

We no longer store “just text”.

We store context about text – and that changes everything.

Smart ingestion pipelines now add:

Document-level summaries

A global overview that tells the retriever what the entire document is about.

Synthetic Questions

We generate example questions that each chunk can answer:

- “What are the security requirements?”

- “How does price change by consumption level?”

These pseudo-questions act like shortcuts, making future retrieval more accurate.

Freshness and Time Awareness

In fast-moving domains, outdated information is dangerous.

Temporal tags allow the system to say:

“Ignore this – it’s older than the new policy”.

Suddenly your chatbot stops citing 2023 rules while the world moves on.
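One way to picture this metadata is a chunk record that carries a summary, generated questions, and a date, plus a filter that drops stale entries. This is a minimal sketch: the `Chunk` fields, the `drop_stale` helper, and the sample policy texts are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Chunk:
    text: str
    doc_summary: str                                   # document-level overview
    synthetic_questions: list[str] = field(default_factory=list)
    effective_date: date = date.min                    # when the content took effect


def drop_stale(chunks: list[Chunk], cutoff: date) -> list[Chunk]:
    """Keep only chunks that took effect on or after the cutoff date."""
    return [c for c in chunks if c.effective_date >= cutoff]


chunks = [
    Chunk("Refunds are accepted within 14 days.", "Refund policy (superseded)",
          [], date(2023, 3, 1)),
    Chunk("Refunds are accepted within 30 days.", "Refund policy",
          ["How long do I have to request a refund?"], date(2025, 6, 1)),
]
fresh = drop_stale(chunks, cutoff=date(2025, 1, 1))
print([c.text for c in fresh])
```

In practice the cutoff would probably come from the policy that supersedes older documents rather than a hard-coded date.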

2. Retrieval Layer

The “Scout” that actually finds the answer

Once the library is set up, the question becomes:

“Can the system find the right passage – and ignore the wrong ones?”

In 2026, relying solely on vector search is considered rookie-level engineering.

Vectors are powerful, but they are fuzzy. They match meaning, not exact terms.

That’s why modern retrieval is hybrid.

Hybrid Retrieval = Semantic + Keyword

- Vector search finds related concepts

- BM25 / keyword search finds specific words

Example:

A user asks:

“What does error 404-X-92 mean?”

Vector search may return:

“Troubleshooting common HTTP errors”.

Meanwhile, the keyword search returns:

“404-X-92 appears when the authentication token expires”.

Both are relevant – but only one actually provides the answer.

Hybrid retrieval mixes the two, then intelligently merges the results.
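The article does not say how the two result lists are merged. One widely used option is reciprocal rank fusion (RRF), sketched below with made-up document IDs. The constant `k = 60` is a commonly used default, not something the text prescribes.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs; earlier ranks contribute more."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical results for the query "What does error 404-X-92 mean?"
vector_hits = ["http-errors-guide", "auth-overview", "error-404-x-92"]
keyword_hits = ["error-404-x-92", "release-notes"]

merged = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(merged[0])
```

Because `error-404-x-92` appears in both lists, its combined score beats every document that appears in only one, so it moves to the top.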

Reranking — The Unsung Hero

Even the best retrievers return noise.

That’s why we rerank.

A cross-encoder model takes:

- The user query

- Each retrieved chunk

and scores each pair not just on similarity, but on deep relevance.

The top 2-3 chunks survive.

Everything else stays out of the prompt.

The result?

- Fewer distractions

- Shorter prompts

- Faster responses

- Dramatically improved accuracy

Most teams that adopt reranking say the same thing:

“It felt like flipping a switch on quality”.
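The reranking step itself is small once a scoring model exists. The sketch below takes any function that scores a (query, chunk) pair and keeps the best few. `toy_score` is a deliberately naive placeholder for a real cross-encoder, and all names here are assumptions for illustration.

```python
from typing import Callable


def rerank(query: str, chunks: list[str],
           score_fn: Callable[[str, str], float], top_k: int = 3) -> list[str]:
    """Score every (query, chunk) pair and keep the top_k best chunks."""
    ranked = sorted(chunks, key=lambda chunk: score_fn(query, chunk), reverse=True)
    return ranked[:top_k]


def toy_score(query: str, chunk: str) -> float:
    """Placeholder for a cross-encoder: fraction of query words found in the chunk."""
    query_words = set(query.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & set(chunk.lower().split())) / len(query_words)


candidates = [
    "General HTTP troubleshooting tips",
    "The error appears after token expiry",
    "Billing FAQ",
]
best = rerank("token expiry error", candidates, toy_score, top_k=2)
print(best)
```

Swapping `toy_score` for a trained cross-encoder changes the quality of the ranking, not the shape of the code.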

3. Generation Layer

Turning retrieved facts into credible answers

This is where previous systems went off the rails – they treated LLMs as creative writers rather than disciplined analysts.

Production systems enforce rules.

Rule #1: Cite or remain silent

Every meaningful claim points to a source.

If the information is not in the retrieved context…

The model should say “I don’t know”.

Not:

“I believe…”

“It could be…”

“Usually…”

Grounded systems reward honesty and penalize guesswork.
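A simple post-check can back up this rule. The sketch below assumes the model is prompted to cite sources as bracketed numbers such as `[1]`; that convention, the function name, and the fallback text are assumptions. It only verifies that citations exist and point at real sources, not that each claim is actually supported.

```python
import re

FALLBACK = "I don't know."


def enforce_citations(answer: str, num_sources: int) -> str:
    """Return the answer only if it cites at least one valid source like [1]."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    valid = bool(cited) and all(1 <= n <= num_sources for n in cited)
    return answer if valid else FALLBACK


print(enforce_citations("Tokens expire after 24 hours [1].", num_sources=2))
print(enforce_citations("I believe tokens usually expire daily.", num_sources=2))
```

The first call passes the answer through; the second has no citation, so it is replaced with the fallback.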

Rule #2: Structure over Storytelling

In production, your LLM doesn’t just talk to users.

It is also talking to:

- Dashboards

- Downstream tools

- Alerts

- Automation pipelines

So instead of raw text, we return structured JSON or typed objects.

That means:

- Predictable output

- Fewer parsing failures

- Safe automation

- No stray apologies or filler text breaking UI components

Your LLM becomes an API, not a poet.
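To make the idea concrete, here is a minimal sketch that parses model output as JSON and rejects anything that does not match an expected shape. The `Answer` fields are hypothetical; a real system might use a schema library instead of hand-written checks.

```python
import json
from dataclasses import dataclass


@dataclass
class Answer:
    answer: str
    sources: list[str]
    confidence: float


def parse_answer(raw: str) -> Answer:
    """Parse model output as JSON and reject anything off-schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on plain prose
    if not isinstance(data, dict) or set(data) != {"answer", "sources", "confidence"}:
        raise ValueError("unexpected fields in model output")
    if not isinstance(data["answer"], str):
        raise ValueError("answer must be a string")
    sources = data["sources"]
    if not (isinstance(sources, list) and all(isinstance(s, str) for s in sources)):
        raise ValueError("sources must be a list of strings")
    confidence = data["confidence"]
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    return Answer(data["answer"], sources, float(confidence))


raw = '{"answer": "The token expired.", "sources": ["errors/404-x-92"], "confidence": 0.9}'
parsed = parse_answer(raw)
print(parsed.answer, parsed.sources)
```

Anything that fails validation raises `ValueError`, so the caller can retry or fall back instead of passing free text to a dashboard or automation step.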

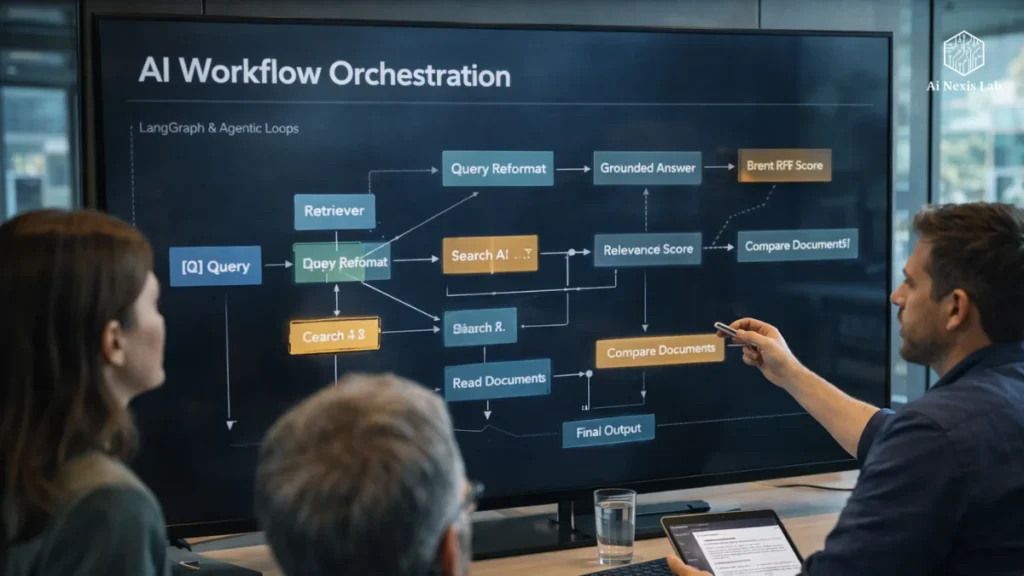

4. Orchestration Layer

When One Prompt Isn’t Enough

Real questions aren’t always single-step.

“Compare our 2024 and 2025 revenue trends”.

It requires:

- Retrieving 2024 data

- Retrieving 2025 data

- Reasoning over both

- Making the comparison

Modern systems use graph-based orchestration to manage this.

The model doesn’t “wing it” – it works in deliberate loops:

- Plan

- Retrieve

- Reflect

- Refine

- Respond

And because the orchestration framework is now standardized, AI workflows are:

- Auditable

- Repeatable

- Maintainable

Exactly what the enterprise needed.
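The loop can be sketched without any particular framework. In the example below every step is a plain function passed in by the caller; the function names, the naive refinement step, and the revenue figures are invented for illustration and do not represent a specific orchestration library.

```python
from typing import Callable


def answer_with_loop(question: str,
                     plan: Callable[[str], list[str]],
                     retrieve: Callable[[str], list[str]],
                     is_sufficient: Callable[[str, list[str]], bool],
                     respond: Callable[[str, list[str]], str],
                     max_rounds: int = 3) -> str:
    """Plan sub-queries, retrieve, reflect on coverage, refine, then respond."""
    context: list[str] = []
    queries = plan(question)                      # plan
    for _ in range(max_rounds):
        for query in queries:
            context.extend(retrieve(query))       # retrieve
        if is_sufficient(question, context):      # reflect
            break
        queries = [f"{question} (more detail)"]   # refine: naive placeholder
    return respond(question, context)             # respond


# Made-up data standing in for a real retriever.
facts = {"2024 revenue": ["2024 revenue: 10M"], "2025 revenue": ["2025 revenue: 14M"]}

result = answer_with_loop(
    "Compare our 2024 and 2025 revenue trends",
    plan=lambda q: ["2024 revenue", "2025 revenue"],
    retrieve=lambda q: facts.get(q, []),
    is_sufficient=lambda q, ctx: len(ctx) >= 2,
    respond=lambda q, ctx: " | ".join(ctx),
)
print(result)
```

Bounding the loop with `max_rounds` keeps the workflow predictable, which is part of what makes it auditable and repeatable.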

MCP and Pluggable Context

The Model Context Protocol changed the game by making data sources modular.

Instead of writing custom integrations for each tool, MCP provides a consistent interface.

So the model can say:

- “Get the latest log”.

- “Check that Slack thread”.

- “Query this table”.

All through a single bridge.

The result?

Less glue code.

Fewer brittle endpoints.

Much more maintainable systems.
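The snippet below is not the MCP SDK. It is a plain-Python illustration of the underlying idea: every data source is registered behind the same call shape, so the model-facing layer needs only one entry point. The registry, decorator, and tool names are all hypothetical.

```python
from typing import Callable

# Hypothetical registry: each data source is a named function returning text.
TOOLS: dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Register a function under a tool name."""
    def decorator(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return decorator


@tool("latest_log")
def latest_log(service: str) -> str:
    return f"(stub) latest log line for {service}"


@tool("query_table")
def query_table(table: str, where: str = "") -> str:
    return f"(stub) rows from {table} {where}".strip()


def call_tool(name: str, **kwargs) -> str:
    """Single entry point that the model-facing layer would use."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


print(call_tool("latest_log", service="api"))
print(call_tool("query_table", table="orders"))
```

Adding a new source means registering one more function, with no change to the calling code.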

Part 2: The Evals – Because “It Looks Good” Isn’t a Metric

Sooner or later someone asks:

“But how do you know if a system is really good?”

In 2026, no one answers:

- “That sounds good”.

- “Users haven’t complained”.

- “The demo worked”.

We measure.

And the industry standard framework revolves around three pillars – the RAG Triad.

RAG Triad

Context Relevance

Did the system retrieve the right passages?

A low score here usually means:

- Poor chunking

- Poor retriever tuning

- Noisy metadata

Fix retrieval. Don’t blame the model.

Faithfulness

Did the answer stay within the retrieved sources?

This is the hallucination guardrail.

If faithfulness drops, it means that:

- Your prompts are weak

- Your model is improvising

- Your citations are not enforced

Production systems treat faithfulness like uptime:

Drops are unacceptable.

Answer Relevance

Does the answer actually solve the user’s question?

Sometimes retrieval is perfect…

and the model still rambles or ignores the instructions.

Answer relevance tracks exactly that.

Creating a realistic evaluation workflow

Senior AI engineers don’t guess. They:

Create a golden dataset

No fluffy questions. Hard questions.

Edge-cases. Legacy-policy questions. Hard ambiguities. Questions that previously misled the system.

Each one has:

- Correct Answer

- Approved References

- Evaluation Notes

This becomes your permanent regression suite.
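A golden dataset can start as a small list of typed records. The sketch below mirrors the three fields listed above; the class name, the reference ID format, and the `retrieval_hit` helper are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenCase:
    question: str
    correct_answer: str
    approved_references: tuple[str, ...]
    notes: str = ""


GOLDEN_SET = [
    GoldenCase(
        question="What does error 404-X-92 mean?",
        correct_answer="The authentication token has expired.",
        approved_references=("errors/404-x-92",),  # hypothetical document ID
        notes="Exact-ID lookup; a good probe for keyword search.",
    ),
]


def retrieval_hit(case: GoldenCase, retrieved_ids: list[str]) -> bool:
    """True if at least one approved reference was retrieved."""
    return any(ref in retrieved_ids for ref in case.approved_references)


print(retrieval_hit(GOLDEN_SET[0], ["http-errors-guide", "errors/404-x-92"]))
```

Keeping cases immutable (`frozen=True`) helps the set behave like a stable regression suite: changing a case becomes an explicit, reviewable edit.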

Automate CI/CD evals

Whenever something changes:

- Prompts are updated

- Models are updated

- Embeddings are updated

- Rerankers change

The entire suite runs automatically.

What if performance drops?

Merges are blocked.

We do not ship regressions. Period.
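The gate itself can be a few lines that compare the current eval scores with a stored baseline. This is a sketch under assumptions: the metric names, the sample scores, and the `tolerance` value are invented, and a real CI job would load both dictionaries from eval output.

```python
def regressions(baseline: dict[str, float], current: dict[str, float],
                tolerance: float = 0.01) -> list[str]:
    """Return the metrics that dropped by more than `tolerance`."""
    return [name for name, old in baseline.items()
            if current.get(name, 0.0) < old - tolerance]


# Illustrative numbers only.
baseline = {"context_relevance": 0.90, "faithfulness": 0.97, "answer_relevance": 0.92}
current = {"context_relevance": 0.91, "faithfulness": 0.93, "answer_relevance": 0.92}

failed = regressions(baseline, current)
if failed:
    print("Blocking merge, regressed metrics:", failed)
    # In CI, exit non-zero here (for example `raise SystemExit(1)`) to fail the job.
```

A missing metric counts as a score of 0.0, so silently dropping an eval also blocks the merge.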

Use LLMs as judges

Large judge models grade answers – not just scoring, but explaining:

- Why something failed

- Where the context was misused

- Which chunk caused the confusion

Those explanations drive improvements.
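A judge call usually comes down to a grading prompt plus a parser for the verdict. In the sketch below, `call_model` is a hypothetical function that sends a prompt to whichever judge model is in use and returns its text. The prompt wording and JSON fields are assumptions, and the stub response exists only to make the example runnable.

```python
import json
from typing import Callable

JUDGE_PROMPT = """You are grading a RAG answer.
Question: {question}
Retrieved context: {context}
Answer: {answer}
Reply with JSON: {{"score": <0-1>, "reason": "<why>"}}"""


def judge(question: str, context: str, answer: str,
          call_model: Callable[[str], str]) -> tuple[float, str]:
    """Ask a judge model for a score and an explanation."""
    prompt = JUDGE_PROMPT.format(question=question, context=context, answer=answer)
    verdict = json.loads(call_model(prompt))
    return float(verdict["score"]), str(verdict["reason"])


def stub_model(prompt: str) -> str:
    """Stand-in for a real judge model call."""
    return '{"score": 0.2, "reason": "The answer states a fact missing from the context."}'


score, reason = judge(
    "What does error 404-X-92 mean?",
    "404-X-92 appears when the authentication token expires.",
    "It means the server is down.",
    stub_model,
)
print(score, reason)
```

Storing the `reason` next to the score is what turns a failing eval into something a developer can act on.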

Keep humans in the loop

AI is confident. Reality is not always neat.

So when:

- Reranker scores are low

- Retrieval relevance is uncertain

- Answer confidence is low

The system flags the question.

A human reviews it.

And – crucially – that case goes back to the golden dataset.

Your system learns from its most difficult moments.
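The flagging rule can be expressed as a threshold check over the three signals listed above. Field names, the shared `threshold` of 0.5, and the in-memory review queue are assumptions; a production system would tune thresholds per signal and persist the queue.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    top_rerank_score: float
    context_relevance: float
    answer_confidence: float


def needs_review(signals: Signals, threshold: float = 0.5) -> bool:
    """Flag the case if any signal falls below the threshold."""
    lowest = min(signals.top_rerank_score,
                 signals.context_relevance,
                 signals.answer_confidence)
    return lowest < threshold


review_queue: list[str] = []


def handle(question: str, signals: Signals) -> None:
    """Queue uncertain questions; a human reviews them before they join the golden set."""
    if needs_review(signals):
        review_queue.append(question)


handle("Which policy applies to legacy contracts?", Signals(0.9, 0.8, 0.3))
handle("What does error 404-X-92 mean?", Signals(0.9, 0.8, 0.7))
print(review_queue)
```

Only the first question is queued, because its answer confidence falls below the threshold.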

Why all this matters

We have passed the novelty stage.

Businesses don’t care if an AI assistant seems smart.

They care whether:

- It finds the right clause in a 400-page contract

- It gives precise answers

- It doesn’t fabricate information

- It improves over time

- It’s safe, testable, and auditable

Separating:

- Architecture (how it works)

- Evaluation (proof that it works)

finally puts AI where it belongs:

Alongside every other serious engineering discipline.

And the ironic twist?

When teams finally slow down and build rigorously…

They move faster.

They debug faster.

They ship with confidence.

They sleep better.

Because they aren’t constantly fighting hallucinations in production.

Frequently Asked Questions

Q1: Is RAG still relevant as models are getting smarter?

Yes – maybe more than before.

Larger models are more confident and more persuasive.

RAG grounds them in your facts, not random internet memory.

And it gives you:

1) Auditability

2) Version control over knowledge

3) Transparency of where answers came from

Even the most capable models benefit from reliable retrieval.

Q2: Can small teams really build a pipeline like this?

Sure – but not by building everything by hand.

Today’s tool ecosystem makes this framework more accessible:

1) Orchestration frameworks

2) Standardized eval tools

3) Better retrievers

4) Managed vector stores

5) MCP-compliant integrations

What matters is not the size of the team.

What matters is discipline and design.

Q3: How often should we rerun the evals?

Anytime something meaningful changes:

1) Embeddings

2) Prompts

3) Ranking strategies

4) Model versions

5) Ingestion rules

And on a schedule – weekly or even daily – depending on risk tolerance.

Evaluation is your early warning system.

Q4: Do we always need hybrid search?

If your domain includes any of the following:

1) IDs

2) Codes

3) Contract clauses

4) Legal sections

5) Logs

6) API documentation

Yes – hybrid is non-negotiable.

Vectors alone will miss exact-match queries.

Q5: Isn’t JSON output brittle?

Not when it is designed properly.

Structured output:

1) Prevents UI failures

2) Allows automation

3) Simplifies logging and debugging

4) Enforces predictable behavior

And with strong schema validation, inconsistent output is caught before it reaches users.

Q6: What about privacy and security?

Modern AI systems should:

1) Redact sensitive data

2) Isolate environments

3) Log responsibly

4) Ensure access controls extend into the RAG pipeline

Security is not an afterthought.

It’s part of the design.

Final Thoughts

The AI world has matured rapidly.

We replaced the “wow factor” with something more valuable:

Systems that work

Systems we can trust

Systems that improve instead of degrade

If you’re still shipping prototypes dressed up as products, it’s time to level up your pipeline, your evaluations, and your expectations.

Because companies that treat AI as infrastructure?

They are not chasing hype.

They’re building a durable advantage.